
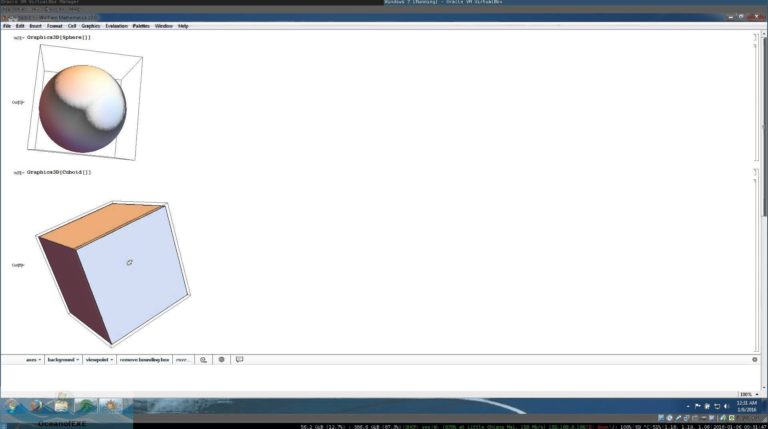

Machine Learning & Neural Networks

Two years ago we released Version 12.0 of the Wolfram Language. Here are the updates in data and data science since then, including the latest features in 13.0. The contents of this post are compiled from Stephen Wolfram’s Release Announcements for 12.1, 12.2, 12.3 and 13.0.

GANs, BERT, GPT-2, ONNX, …: The Latest in Machine Learning (March 2020)

Machine learning is all the rage these days. Of course, we were involved with it even a very long time ago. We introduced the first versions of our flagship highly automated machine-learning functions Classify and Predict back in 2014, and we introduced our first explicitly neural-net-based function, ImageIdentify, in early 2015. And in the years since then we’ve built a very strong system for machine learning in general, and for neural nets in particular.

First, we’ve emphasized high automation, using machine learning to automate machine learning wherever possible, so that even non-experts can immediately make use of leading-edge capabilities. The second big thing is that we’ve been curating neural nets, just like we curate so many other things. So that means that we have pretrained classifiers and predictors and feature extractors that you can immediately and seamlessly use. And the other big thing is that our whole neural net system is symbolic, in the sense that neural nets are specified as computable, symbolic constructs that can be programmatically manipulated, visualized, etc.

In Version 12.1 we’ve continued our leading-edge development in machine learning. There are 25 new types of neural nets in our Wolfram Neural Net Repository, including ones like BERT and GPT-2. And the way things are set up, it’s immediate to use any of these nets. (Also, in Version 12.1 there’s support for the new ONNX neural net specification standard, which makes it easier to import the very latest neural nets that are being published in almost any format.) This gets the symbolic representation of GPT-2 from our repository: I wonder what that was trained on…

By the way, people sometimes talk about machine learning and neural nets as being in opposition to traditional programming language code. A neural net just learns from real-world examples or experience, whereas a traditional programming language is about giving a precise abstract specification of what in detail a computer should do.
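The original code for retrieving GPT-2 from the repository did not survive in this copy of the post. As a rough sketch of what such a retrieval might look like, the following uses `NetModel`, the Wolfram Language function for fetching pretrained nets from the Wolfram Neural Net Repository. The exact model name string is an assumption and may differ from the one used in the original announcement, so the sketch first lists the candidate names. It needs network access to the repository.

```wolfram
(* List the names of all repository models and keep those that mention "GPT". *)
gptNames = Select[NetModel[], StringContainsQ[#, "GPT"] &]

(* Assumed model name: if it is not in gptNames, substitute one that is. *)
gpt2 = NetModel["GPT2 Transformer Trained on WebText Data"]

(* The net is an ordinary symbolic expression, so it can be inspected like one. *)
Head[gpt2]
```

Because the result is a symbolic construct rather than an opaque binary, the same object can be passed on to other net functions for visualization or modification, which is the point the text makes about the system being symbolic.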
